The precision with which numbers are represented shapes the clarity and impact of communication, particularly in mathematical contexts where accuracy is key. Understanding how to convert quantities into decimal form is a foundational skill that underpins countless applications across disciplines. Whether navigating scientific measurements, financial transactions, or everyday calculations, mastering this conversion ensures that numerical information is not only understood but also effectively communicated. In this exploration, we examine the nuances of translating numbers expressed in words or fractions into decimal representation, emphasizing the importance of precision and the practical implications of such skills. Such proficiency serves as a cornerstone for both academic pursuits and professional endeavors, where even minor inaccuracies can have significant consequences. The process itself demands careful attention to detail, requiring a commitment to clarity and precision that transcends mere technical execution. Through this lens, we uncover not only the mechanics of conversion but also the broader significance of maintaining accuracy in data-driven environments.

Understanding Decimal Conversion: A Foundation for Precision

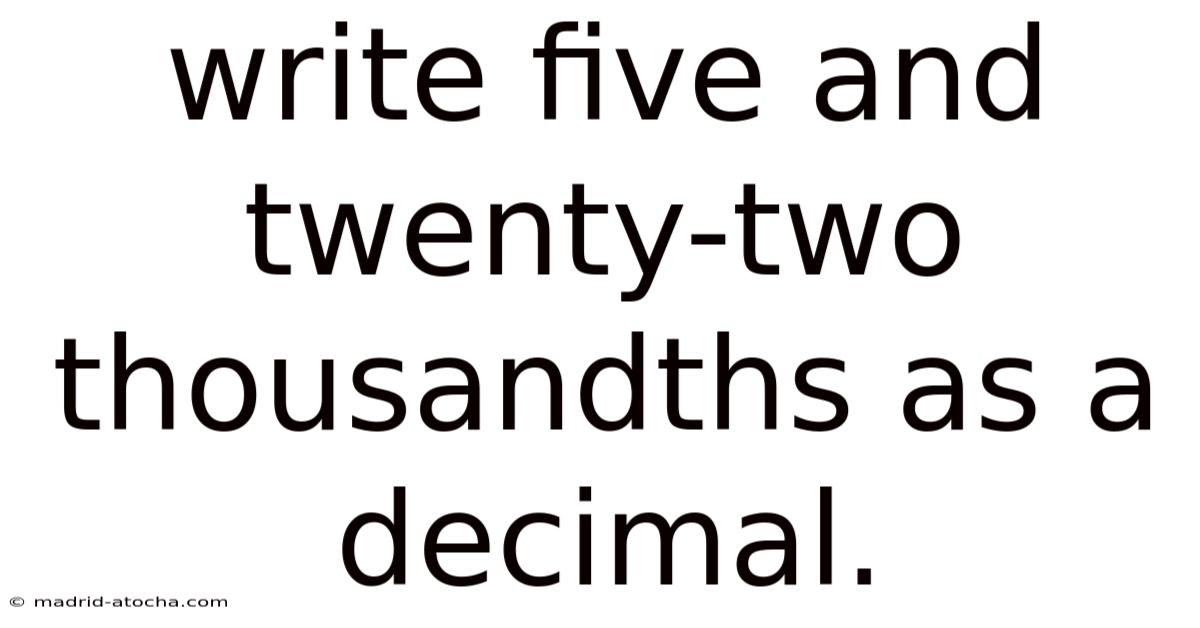

At the heart of numerical representation lies the decimal system, a place-value framework that expresses whole numbers and fractional parts in a single notation. When converting a given value into a decimal, the process involves breaking down the number into its constituent parts, ensuring that each component is accurately accounted for. For instance, consider the task at hand: transforming five and twenty-two thousandths into a decimal. This specific example serves as a gateway to grasping the broader principles applicable to all numerical transformations.

The first step is to isolate the whole number portion. In our example, that’s simply “five.” Next, we focus on the fractional part, "twenty-two thousandths." This represents a quantity smaller than one, and its conversion to a decimal requires understanding the place value system. The first place after the decimal point is tenths (1/10), the second is hundredths (1/100), and the third is thousandths (1/1000). Therefore, twenty-two thousandths translates to 22/1000.

To convert this fraction to a decimal, we divide 22 by 1000. This division yields 0.022. The zero immediately after the decimal point is a placeholder: it pushes the last digit of 22 into the thousandths place, so the value of the original number is preserved. Finally, we combine the whole number and the decimal portion, resulting in the final decimal representation: 5.022. This methodical breakdown, applying the principles of place value, is a fundamental technique applicable to a wide array of numerical conversions.
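The place-value arithmetic for five and twenty-two thousandths can be checked with a minimal Python sketch. The helper name `mixed_to_decimal` is hypothetical, introduced here only for illustration, and the sketch assumes the fractional part is given as a numerator over a power-of-ten denominator (22 over 1000 in this case).

```python
from decimal import Decimal

def mixed_to_decimal(whole: int, numerator: int, denominator: int) -> Decimal:
    """Combine a whole part and a fractional part into one decimal value.

    Hypothetical helper for illustration. The result is exact when the
    denominator is a power of ten, as it is for tenths, hundredths,
    and thousandths.
    """
    fractional = Decimal(numerator) / Decimal(denominator)  # 22 / 1000 -> 0.022
    return Decimal(whole) + fractional

value = mixed_to_decimal(5, 22, 1000)
print(value)  # 5.022
```

Using `Decimal` instead of `float` keeps the digits exactly as they would be written by hand, which makes it easier to see that the "22" ends in the thousandths place.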

Beyond simple conversions, dealing with repeating decimals requires a slightly different approach. Numbers like 1/3 or 2/9 result in decimal representations that continue infinitely, exhibiting a repeating pattern. These are typically expressed using a bar over the repeating digits or a trailing ellipsis (e.g., 1/3 = 0.333...). Understanding this concept is crucial for accurate calculations and avoids potential misinterpretations. Furthermore, recognizing terminating decimals (those with a finite number of digits after the decimal point, like 0.25) versus repeating decimals helps in anticipating how efficiently a value can be computed and where rounding errors may arise.
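One way to see the difference between terminating and repeating decimals is to carry out long division and watch for a remainder that recurs. The sketch below is a minimal illustration, not a definitive implementation: the function name `expand` is hypothetical, it assumes a non-negative numerator and a positive denominator, and it marks the repeating digits with parentheses as a plain-text stand-in for the bar.

```python
def expand(numerator: int, denominator: int) -> str:
    """Return the decimal expansion of numerator/denominator as a string.

    Hypothetical helper: repeating digits are wrapped in parentheses,
    e.g. 1/3 -> "0.(3)". Assumes numerator >= 0 and denominator > 0.
    """
    whole, remainder = divmod(numerator, denominator)
    digits = []   # digits produced after the decimal point
    seen = {}     # remainder -> index of the digit it produced

    # Long division: a remainder of 0 means the decimal terminates;
    # a remainder seen before means the digits repeat from that point.
    while remainder != 0 and remainder not in seen:
        seen[remainder] = len(digits)
        digit, remainder = divmod(remainder * 10, denominator)
        digits.append(str(digit))

    if not digits:
        return str(whole)
    if remainder == 0:
        return f"{whole}." + "".join(digits)

    start = seen[remainder]
    return f"{whole}." + "".join(digits[:start]) + "(" + "".join(digits[start:]) + ")"

print(expand(1, 4))  # 0.25
print(expand(1, 3))  # 0.(3)
print(expand(2, 9))  # 0.(2)
print(expand(1, 6))  # 0.1(6)
```

Because there are only finitely many possible remainders, the loop always ends: either the division comes out evenly or a remainder recurs.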

The practical implications of precise decimal conversion extend far beyond the classroom. In scientific research, accurate measurements are critical for reliable results. A slight error in converting a measurement can propagate through calculations, leading to flawed conclusions. Similarly, in finance, even minor discrepancies in decimal amounts can have significant financial consequences. Consider the impact of a misplaced decimal in a transaction: it could represent a substantial difference in profit or loss. Engineering, architecture, and manufacturing all rely heavily on precise numerical conversions to ensure the safety and functionality of designs and products.

Taken together, the ability to convert quantities into decimal representation is not merely a mathematical exercise; it is a fundamental skill underpinning accuracy and clarity in numerous disciplines. By understanding the principles of place value, fractions, and repeating decimals, we can ensure that numerical information is communicated and used effectively. Mastering this conversion process fosters a deeper appreciation for the precision required in data-driven environments and empowers us to make informed decisions based on accurate numerical representations. The commitment to detail in decimal conversion ultimately contributes to more reliable outcomes and clearer communication.

The advent of digital computing has further amplified the significance of decimal precision. While computers handle vast numerical datasets, their internal representation of decimals (typically using binary floating-point formats) can introduce subtle rounding errors, especially with non-terminating decimals or very large or very small numbers. For instance, in high-frequency trading or complex simulations, these minute errors can accumulate and lead to significant deviations over time. This necessitates a solid understanding of decimal conversion principles to interpret computational outputs correctly, recognize potential inaccuracies, and apply rounding strategies tailored to the specific application's tolerance levels.
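The rounding behavior of binary floating point can be observed directly. The following is a small Python sketch, assuming the standard `float` type (IEEE 754 double precision on common platforms) and the standard-library `decimal` module. The two-decimal-place rounding is an illustrative tolerance, not a recommendation for any particular application.

```python
from decimal import Decimal

# 0.1 and 0.2 have no exact binary representation, so their
# floating-point sum is not exactly the float closest to 0.3.
float_sum = 0.1 + 0.2
print(float_sum == 0.3)             # False

# Decimal built from strings keeps the base-10 digits exactly.
exact_sum = Decimal("0.1") + Decimal("0.2")
print(exact_sum == Decimal("0.3"))  # True

# Rounding to the tolerance the application needs hides the error.
print(round(float_sum, 2) == 0.3)   # True

# Passing a float to Decimal reveals the binary value actually stored.
print(Decimal(0.1))
```

The last line prints a long expansion that differs slightly from 0.1, which is the stored binary approximation made visible.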

Moreover, the rise of big data analytics underscores the need for robust decimal handling. Processing massive datasets requires efficient algorithms that maintain numerical integrity throughout calculations. Understanding whether a decimal is terminating or repeating isn't just academic; it informs the choice of data types (e.g., fixed-point vs. floating-point) and computational methods to minimize error propagation and ensure the reliability of statistical models and the insights derived from the data.
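To make the fixed-point versus floating-point choice concrete, here is a hedged Python sketch that adds ten payments of 0.10 in two ways. Storing amounts as integer cents is one common fixed-point convention, assumed here for illustration; the variable names are hypothetical.

```python
# Floating-point accumulation: each addition can carry a tiny
# binary rounding error that builds up over repeated operations.
float_total = 0.0
# Fixed-point accumulation: amounts stored as whole cents (integers),
# so every addition is exact.
cents_total = 0

for _ in range(10):
    float_total += 0.10
    cents_total += 10

print(float_total == 1.0)  # False
print(cents_total == 100)  # True

# Convert back to a decimal string only when displaying the value.
print(f"{cents_total // 100}.{cents_total % 100:02d}")  # 1.00
```

The trade-off is that fixed-point requires deciding the smallest unit in advance, while floating-point covers a much wider range of magnitudes at the cost of exactness.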

In conclusion, the seemingly straightforward process of converting quantities into decimals forms an indispensable pillar of numerical literacy. Its mastery transcends basic arithmetic, serving as the bedrock for scientific discovery, financial integrity, engineering excellence, and the effective harnessing of computational power. As data becomes increasingly central to decision-making across all domains, the ability to accurately represent, manipulate, and interpret decimal values remains paramount. By internalizing the principles of place value, fraction equivalence, and the nuances of repeating decimals, we equip ourselves with the tools to navigate a world where precision dictates reliability. The commitment to mastering these fundamental conversions is an investment in clarity, accuracy, and informed judgment in an increasingly complex numerical landscape.

In modern technological landscapes, data-driven systems are essential for transforming raw information into actionable insights. These systems rely heavily on precise decimal conversions to ensure that every figure translates consistently across platforms, whether in software interfaces, scientific tools, or everyday applications. The precision embedded in these conversions directly influences the trustworthiness of the results, especially when decisions hinge on minute numerical differences. As we continue to integrate advanced analytics into our daily workflows, honing our understanding of decimal representation becomes not just a skill but a necessity.

Beyond the technicalities, the application of accurate decimal handling fosters clearer communication between stakeholders. When data is presented with consistent numerical standards, it bridges gaps in comprehension and reduces ambiguity. This uniformity is crucial in fields ranging from healthcare research to financial forecasting, where clarity can mean the difference between safe decisions and costly mistakes. Embracing this practice ensures that all parties involved interpret data in the same light, reinforcing collaborative efforts and strategic planning.

What's more, the evolving complexity of data ecosystems demands a vigilant approach to decimal management. As algorithms become more sophisticated, the underlying mathematics must adapt to maintain integrity. Recognizing the patterns in repeating decimals or the implications of binary formats allows professionals to preempt potential issues before they escalate. By staying ahead of these challenges, we reinforce the stability and dependability of our technological frameworks.

In summary, mastering the nuances of decimal conversions is more than an academic exercise; it is a cornerstone of competence in today’s data-centric world. It empowers us to navigate uncertainty with confidence, uphold standards of accuracy, and drive progress across disciplines. The journey towards precision in numerical representation ultimately shapes our ability to make informed decisions, adapt to change, and thrive in an era where numbers define outcomes. Embracing this responsibility strengthens our capacity to lead and innovate wherever numbers inform decisions.